I have been busy coding improvements to SCM.

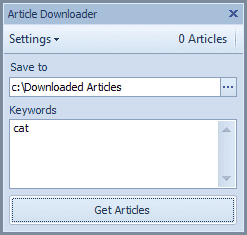

Although still in a really early alpha stage, SCM will soon get its own article downloader.

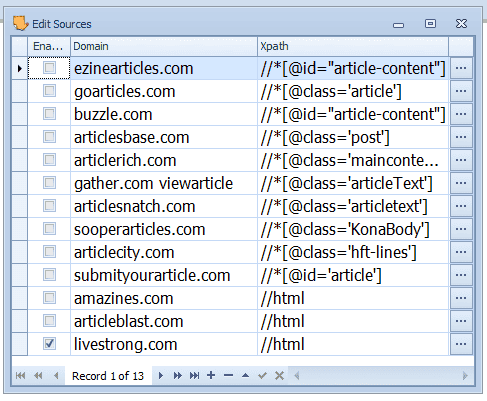

The cool thing, of course, is that SCM supports your own custom article sources.

Combine your own article sources with the article directory and you get a true article downloader that can grab anything you ever dreamed of.

Scraping articles without xpath

Also, after some intense R&D I have found a way to remove the xpath requirement when adding a new article source.

Now SCM only needs a domain name.

Just like Google, SCM will read a page, try to figure out what the main content is, and scrape that.

In the next version of SCM, when you add an article source, it will automatically default to an xpath of “//html”.

In practice, this saves time when adding new sites. You only need to bust out the xpath on a site where SCM doesn't detect the content properly.

After all, xpath is pretty terrible. It's hard to understand, and not having to deal with it makes SCM an even easier program to pick up and use.
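To give a feel for what xpath-free detection can look like, here is a minimal Python sketch. It is not SCM's actual algorithm, which isn't described in this post. It assumes a very simple heuristic: the main content is the container element holding the most text that is not inside a link. The names `MainContentGuesser` and `guess_main_content` are made up for this illustration.

```python
from html.parser import HTMLParser

# Elements treated as candidate content containers (an assumption of this sketch).
CONTAINERS = {"article", "main", "section", "div", "td", "body"}
# Elements whose text is never counted as article content.
IGNORED = {"script", "style", "nav", "header", "footer", "aside", "form"}


class MainContentGuesser(HTMLParser):
    """Collects the non-link text of each container element."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.stack = []        # open containers, innermost last: (tag, text chunks)
        self.blocks = []       # text chunks of every container that has been closed
        self.ignore_depth = 0  # > 0 while inside an IGNORED element
        self.link_depth = 0    # > 0 while inside an <a> element

    def handle_starttag(self, tag, attrs):
        if tag in IGNORED:
            self.ignore_depth += 1
        elif tag == "a":
            self.link_depth += 1
        elif tag in CONTAINERS:
            self.stack.append((tag, []))

    def handle_endtag(self, tag):
        if tag in IGNORED:
            self.ignore_depth = max(0, self.ignore_depth - 1)
        elif tag == "a":
            self.link_depth = max(0, self.link_depth - 1)
        elif tag in CONTAINERS and self.stack and self.stack[-1][0] == tag:
            self.blocks.append(self.stack.pop()[1])

    def handle_data(self, data):
        text = " ".join(data.split())  # collapse whitespace
        if text and self.stack and not self.ignore_depth and not self.link_depth:
            # Credit the text to the innermost open container.
            self.stack[-1][1].append(text)


def guess_main_content(html):
    """Return the text of the container with the most non-link text."""
    parser = MainContentGuesser()
    parser.feed(html)
    parser.close()
    # Include containers the page never closed properly.
    blocks = parser.blocks + [chunks for _, chunks in parser.stack]
    best = max(blocks, key=lambda chunks: sum(len(c) for c in chunks), default=[])
    return " ".join(best)


if __name__ == "__main__":
    page = (
        "<html><body>"
        '<div id="menu"><a href="/">Home</a> <a href="/about">About</a></div>'
        '<div id="post"><p>First paragraph of the article.</p>'
        "<p>Second paragraph of the article.</p></div>"
        "</body></html>"
    )
    print(guess_main_content(page))
```

A heuristic like this will guess wrong on some layouts, which is exactly the case where falling back to a hand-written xpath still makes sense.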

Scraping content in foreign languages?

I have been putting some brain power into the art/science of scraping content from pages in ANY LANGUAGE.

This is quite a task in itself.

I think I nearly have languages based on the English alphabet figured out.

I'll need some willing volunteers to try Asian languages, however!